About

Tom Kelly is a research scientist and software engineer working on graphics, machine learning, and urban environments. His work includes generative AI, procedural modelling, software engineering, and synthetic data creation. Get in touch.

Publications

WinSyn: A High Resolution Testbed for Synthetic Data

Tom Kelly; John Femiani; Peter Wonka

in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024.

@article{kelly2023winsyn,

title = {WinSyn: A High Resolution Testbed for Synthetic Data},

author = {Tom Kelly and John Femiani and Peter Wonka},

url = {https://twak.github.io/winsyn/

https://github.com/twak/winsyn_metadata

https://github.com/twak/winsyn},

year = {2024},

date = {2024-03-01},

journal = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

}

FacadeNet: Conditional Facade Synthesis via Selective Editing

Yiangos Georgiou; Marios Loizou; Tom Kelly; Melinos Averkiou

in: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 5384–5393, 2024.

@inproceedings{georgiou2024facadenet,

title = {FacadeNet: Conditional Facade Synthesis via Selective Editing},

author = {Yiangos Georgiou and Marios Loizou and Tom Kelly and Melinos Averkiou},

url = {https://ygeorg01.github.io/FacadeNet/},

doi = {https://tinyurl.com/2ezat87y},

year = {2024},

date = {2024-01-01},

booktitle = {Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision},

pages = {5384--5393},

}

Large-Scale Auto-Regressive Modeling Of Street Networks

Michael Birsak; Tom Kelly; Wamiq Para; Peter Wonka

in: arXiv preprint, 2022.

@article{birsak2022large,

title = {Large-Scale Auto-Regressive Modeling Of Street Networks},

author = {Michael Birsak and Tom Kelly and Wamiq Para and Peter Wonka},

doi = {https://arxiv.org/abs/2209.00281},

year = {2022},

date = {2022-01-01},

journal = {arXiv preprint},

}

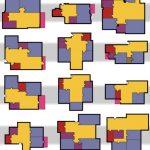

Generative Layout Modeling using Constraint Graphs

Wamiq Para; Paul Guerrero; Tom Kelly; Leonidas Guibas; Peter Wonka

in: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 6690–6700, 2021.

@article{para2020generative,

title = {Generative Layout Modeling using Constraint Graphs},

author = {Wamiq Para and Paul Guerrero and Tom Kelly and Leonidas Guibas and Peter Wonka},

doi = {https://tinyurl.com/4nb59s9a},

year = {2021},

date = {2021-01-01},

journal = {Proceedings of the IEEE/CVF International Conference on Computer Vision},

pages = {6690--6700},

}

Seamless Satellite-image Synthesis

Jialin Zhu; Tom Kelly

in: Computer Graphics Forum, pp. 193–204, 2021.

@inproceedings{zhu2021seamless,

title = {Seamless Satellite-image Synthesis},

author = {Jialin Zhu and Tom Kelly},

doi = {https://doi.org/10.1111/cgf.14413},

year = {2021},

date = {2021-01-01},

booktitle = {Computer Graphics Forum},

volume = {40},

number = {7},

pages = {193--204},

}

CityEngine: An Introduction to Rule-Based Modeling

Tom Kelly

in: Urban Informatics, pp. 637–662, Springer, 2021.

@incollection{2021cityengine,

title = {CityEngine: An Introduction to Rule-Based Modeling},

author = {Tom Kelly},

doi = {https://doi.org/10.1007/978},

year = {2021},

date = {2021-01-01},

booktitle = {Urban Informatics},

pages = {637--662},

publisher = {Springer},

}

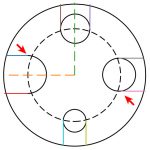

SketchGen: Generating Constrained CAD Sketches

Wamiq Reyaz Para; Shariq Farooq Bhat; Paul Guerrero; Tom Kelly; Niloy Mitra; Leonidas Guibas; Peter Wonka

in: Advances in Neural Information Processing Systems, vol. 34, pp. 5077–5088, 2021.

@article{para2021sketchgen,

title = {SketchGen: Generating Constrained CAD Sketches},

author = {Wamiq Reyaz Para and Shariq Farooq Bhat and Paul Guerrero and Tom Kelly and Niloy Mitra and Leonidas Guibas and Peter Wonka},

doi = {https://proceedings.neurips.cc/paper/2021/hash/28891cb4ab421830acc36b1f5fd6c91e-Abstract.html},

year = {2021},

date = {2021-01-01},

journal = {Advances in Neural Information Processing Systems},

volume = {34},

pages = {5077--5088},

}

Projective Urban Texturing

Yiangos Georgiou; Melinos Averkiou; Tom Kelly; Evangelos Kalogerakis

in: 2021 International Conference on 3D Vision (3DV), pp. 1034–1043, IEEE 2021.

@inproceedings{georgiou2021projective,

title = {Projective Urban Texturing},

author = {Yiangos Georgiou and Melinos Averkiou and Tom Kelly and Evangelos Kalogerakis},

doi = {https://dx.doi.org/10.1109/3DV53792.2021.00111},

year = {2021},

date = {2021-01-01},

booktitle = {2021 International Conference on 3D Vision (3DV)},

pages = {1034--1043},

organization = {IEEE},

}

A Differentiable CGA Shape Procedural System

T Kelly; V Ceballos Inza; Y Yang

in: Proceedings of the 16th ACM SIGGRAPH European Conference on Visual Media Production, CVMP 2019.

@inproceedings{kelly2019differentiable,

title = {A Differentiable CGA Shape Procedural System},

author = {T Kelly and V Ceballos Inza and Y Yang},

url = {https://www.cvmp-conference.org/files/2019/short/35.pdf},

year = {2019},

date = {2019-01-01},

booktitle = {Proceedings of the 16th ACM SIGGRAPH European Conference on Visual Media Production},

organization = {CVMP},

}

Simplifying Urban Data Fusion with BigSUR

Tom Kelly; Niloy Mitra

Architecture Media Politics Society, 2018.

@conference{littlesur,

title = {Simplifying Urban Data Fusion with BigSUR},

author = {Tom Kelly and Niloy Mitra},

url = {https://architecturemps.com/wp-content/uploads/2018/04/Tom_Kelly_and_Niloy_Mitra_Simplifying-Urban-Data-Fusion-with-BigSUR_Abstract-ADU.pdf},

doi = {https://arxiv.org/pdf/1807.00687},

year = {2018},

date = {2018-07-01},

booktitle = {Architecture Media Politics Society},

}

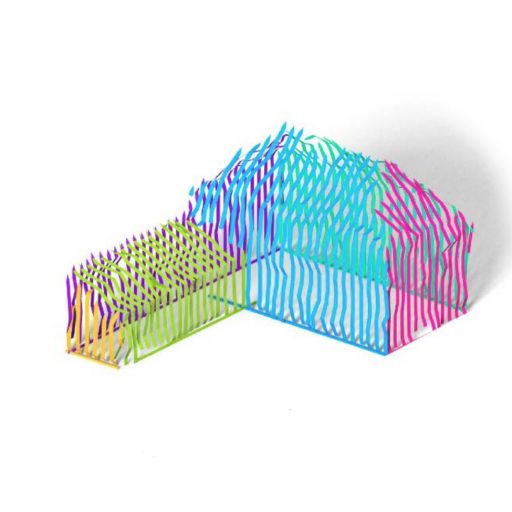

FrankenGAN: guided detail synthesis for building mass-models using style-synchonized GANs

Tom Kelly; Paul Guerrero; Anthony Steed; Peter Wonka; Niloy J Mitra

in: ACM Transactions on Graphics, vol. 37, iss. 6, 2018.

@article{kelly2018frankengan,

title = {FrankenGAN: guided detail synthesis for building mass-models using style-synchonized GANs},

author = {Tom Kelly and Paul Guerrero and Anthony Steed and Peter Wonka and Niloy J Mitra},

doi = {https://dx.doi.org/10.1145/3272127.3275065},

year = {2018},

date = {2018-01-01},

journal = {ACM Transactions on Graphics},

volume = {37},

issue = {6},

}

On Realism of Architectural Procedural Models

J. Beneš; T. Kelly; F. Děchtěrenko; J. Křivánek; P. Müller

in: Computer Graphics Forum, vol. 36, no. 2, pp. 225–234, 2017.

@article{benevs2017realism,

title = {On Realism of Architectural Procedural Models},

author = {J. Beneš and T. Kelly and F. Děchtěrenko and J. Křivánek and P. Müller},

doi = {https://doi.org/10.1111/cgf.13121},

year = {2017},

date = {2017-01-01},

journal = {Computer Graphics Forum},

volume = {36},

number = {2},

pages = {225--234},

}

BigSUR: Large-scale structured urban reconstruction

Tom Kelly; John Femiani; Peter Wonka; Niloy J Mitra

in: ACM Transactions on Graphics, vol. 36, no. 6, 2017.

@article{kelly2017bigsur,

title = {BigSUR: Large-scale structured urban reconstruction},

author = {Tom Kelly and John Femiani and Peter Wonka and Niloy J Mitra},

doi = {https://dx.doi.org/10.1145/3130800.3130823},

year = {2017},

date = {2017-01-01},

journal = {ACM Transactions on Graphics},

volume = {36},

number = {6},

publisher = {Association for Computing Machinery},

}

Interactive Dimensioning of Parametric Models

Tom Kelly; Peter Wonka; Pascal Müller

in: Computer Graphics Forum, vol. 34, no. 2, pp. 117–129, 2015.

@article{kelly2019interactive,

title = {Interactive Dimensioning of Parametric Models},

author = {Tom Kelly and Peter Wonka and Pascal Müller},

doi = {https://dx.doi.org/10.1111/cgf.12546},

year = {2015},

date = {2015-01-01},

journal = {Computer Graphics Forum},

volume = {34},

number = {2},

pages = {117--129},

publisher = {The Eurographics Association and John Wiley & Sons Ltd.},

}

Unwritten Procedural Modeling with the Straight Skeleton

Tom Kelly

University of Glasgow, 2014, (PhD thesis).

@phdthesis{kelly2014unwritten,

title = {Unwritten Procedural Modeling with the Straight Skeleton},

author = {Tom Kelly},

url = {https://theses.gla.ac.uk/4975/},

year = {2014},

date = {2014-01-01},

school = {University of Glasgow},

note = {PhD thesis},

}

Procedural Generation of Parcels in Urban Modeling

Carlos A. Vanegas; Tom Kelly; Basil Weber; Jan Halatsch; Daniel G. Aliaga; Pascal Müller

in: Computer Graphics Forum, vol. 31, no. 2pt3, pp. 681–690, 2012.

@article{vanegas2012procedural,

title = {Procedural Generation of Parcels in Urban Modeling},

author = {Carlos A. Vanegas and Tom Kelly and Basil Weber and Jan Halatsch and Daniel G. Aliaga and Pascal Müller},

doi = {https://doi.org/10.1111/j.1467-8659.2012.03047.x},

year = {2012},

date = {2012-01-01},

journal = {Computer Graphics Forum},

volume = {31},

number = {2pt3},

pages = {681--690},

}

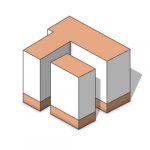

Interactive Architectural Modeling with Procedural Extrusions

Tom Kelly; Peter Wonka

in: ACM Transactions on Graphics (TOG), vol. 30, no. 2, pp. 1–15, 2011.

@article{kelly2011interactive,

title = {Interactive Architectural Modeling with Procedural Extrusions},

author = {Tom Kelly and Peter Wonka},

url = {https://peterwonka.net/Publications/pdfs/2011.TOG.Kelly.ProceduralExtrusions.TechreportVersion.final.pdf},

doi = {https://dx.doi.org/10.1145/1944846.1944854},

year = {2011},

date = {2011-01-01},

journal = {ACM Transactions on Graphics (TOG)},

volume = {30},

number = {2},

pages = {1--15},

publisher = {ACM New York, NY, USA},

}